Emmental and AI

AI innovations like the chatbot ChatGPT are enthusiastically celebrated, but they also trigger fears. Ethical discussions about AI should never revolve around the technology alone, says bioethicist Samia Hurst. Above all, the social, political and economic frameworks in which it is used must be right.

By Roger Nickl

© Celia Lazzarotto

Enthusiasm about unimagined possibilities, and at the same time fear of them: the reactions to the chatbot ChatGPT, which was launched last autumn, have been ambivalent. That says a lot about us. “When new, disruptive technologies come to market, there is often this initial enthusiasm – followed by concerns and fears,” says Samia Hurst. “Enthusiasm tells us something about our dreams – but as we know, dreams can also be very worrying.” For example, AI applications promise to take a lot of work off our hands, but they also threaten jobs and thus people’s livelihoods. And they increasingly give the tech companies that develop them power over our lives and our data. According to Hurst, we need to take these fears and concerns seriously and find solutions that address them as far as possible.

Samia Hurst is a bioethicist working at the University of Geneva and the NCCR Evolving Language. She became known to a wider Swiss audience during the pandemic as vice-president of the federal government’s scientific Covid task force. She is currently researching questions of scientific responsibility and the ethical handling of technological and scientific risks. This also includes the latest developments in artificial intelligence.

Like a Swiss army knife

ChatGPT is a kind of Swiss army knife, says Hurst. The chatbot has numerous capabilities. Some are helpful, such as quickly summarizing longer texts like scientific articles; others are more worrying, such as generating disinformation. “It is difficult to imagine all the possible benefits and risks that lie dormant in such AI tools,” says the ethicist. “Our limited imagination is therefore probably one of the greatest dangers in the current rapid development.”

This makes it all the more important that the opportunities and risks of AI are analysed and discussed as broadly as possible. “It should never be about the technology alone; that is often forgotten,” says Samia Hurst. “The social and economic circumstances in which the technology is applied should be central.” Technological and scientific innovations are rarely good or bad per se; it is the context in which they are used that determines whether their consequences for the people involved are positive or negative.

Radiologists and taxi drivers

Hurst gives an example from medicine: newly developed genetic tests can tell whether someone has an elevated risk of getting a certain disease. A positive result could then lead to a person not being hired by an employer or not being accepted by an insurance company, unless legal provisions and regulations prevent such discrimination. “So the opportunities and risks of the same test are extremely different in a context where there is protection against discrimination and one where there is not,” says the ethicist.

Another example is AI-powered tools in radiology that can analyze X-rays and CT scans faster and more accurately than doctors. “This is a great invention,” says Samia Hurst, “because it improves diagnostics for patients – at least for those who have access to it.” But it also means that radiologists now have less work and may have to look for a new job outside their field. “In their case, this is probably not so risky,” Hurst believes, “because doctors are in demand on the job market.” The situation would be different, however, if taxi drivers were to lose their jobs in the future because of autonomous vehicles. Their livelihoods would then be at risk. “Depending on the social and economic structures in which someone is embedded, the risk can be greater or lesser.”

FDA for IT

To make the use of AI as fair as possible, extensive social and economic assessments and accompanying measures are necessary. “We can’t leave that to the AI developers,” says Samia Hurst, “that’s not their expertise either.” To identify the appropriate accompanying measures, however, the shared groundwork must be done first, namely an evaluation of opportunities and risks that is as broad as possible. “Such comprehensive assessments are needed if we want to get to the bottom of potential risks,” says Hurst. “However, humanity is not very good at such things at the moment.”

In view of the disruptive potential of AI, discussions about the future are also necessary, for example on how we will work in the future, or on what we want to do when technology takes more and more work off our hands. Will we then need an unconditional basic income? Should AI applications be taxed to redistribute some of the profits to those who lose out? And is there a need for institutions similar to the Food and Drug Administration (FDA), which in the USA is responsible for, among other things, the approval of drugs? “A kind of FDA for IT,” Samia Hurst suggests, “which, as an independent body of experts with a transparent catalogue of criteria, can permit or prohibit certain applications.”

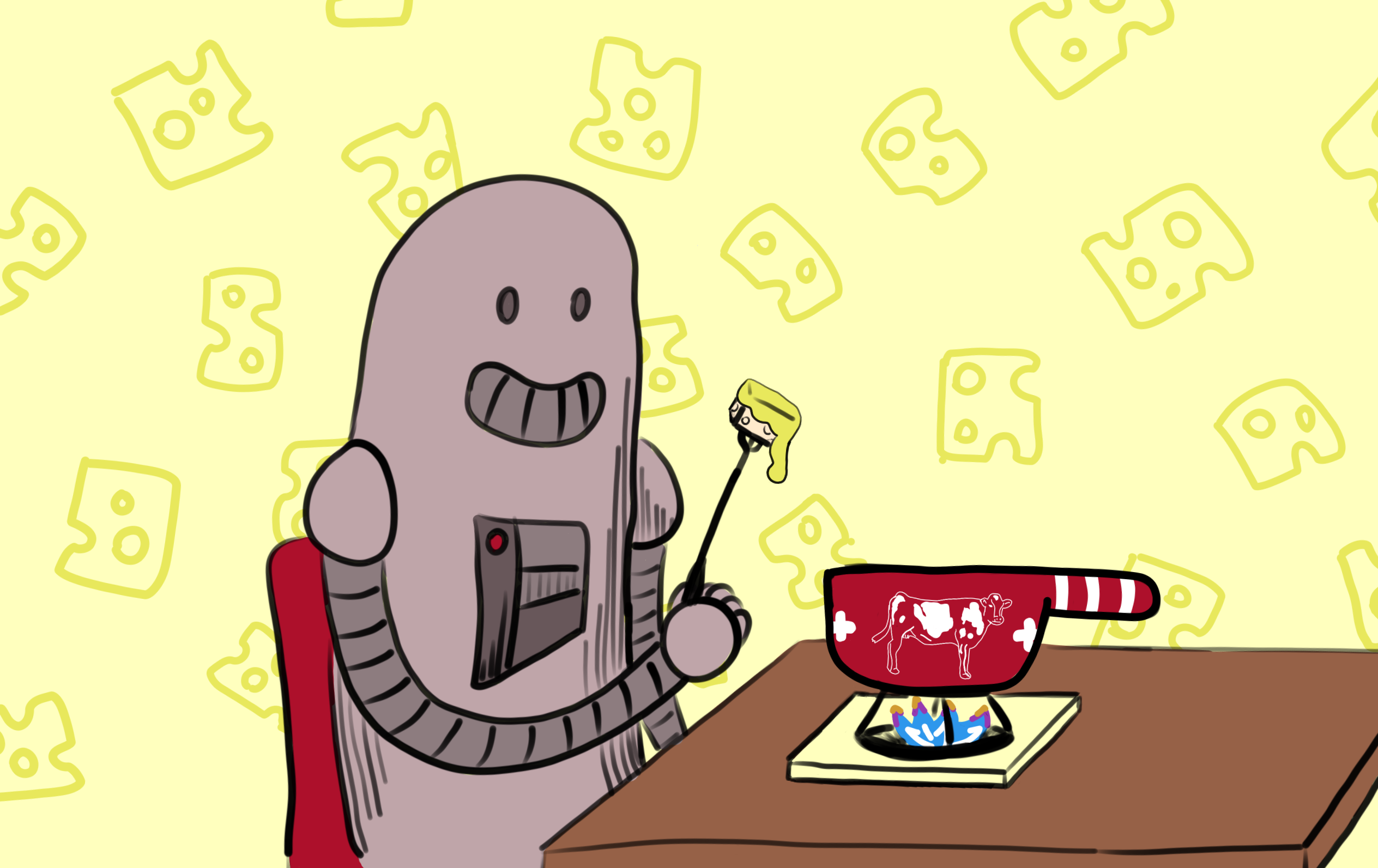

Fewer risks with the Swiss cheese model

Together with her team, Samia Hurst also works on risk analysis and prevention. For example, she is currently investigating how best to identify and minimize the risks of potentially dangerous scientific and technological developments. “There is an ongoing discussion on so-called dual-use research,” says Samia Hurst. “It deals with questions like: What should you do with a paper that shows how a pathogen can be made more pathogenic? Should you publish it or not?” On the one hand, the topic could be scientifically interesting and valuable; on the other hand, it could be misused by bioterrorists.

Such dual use is inherent in many scientific and technological innovations and makes them precarious from a security and ethical point of view. Drones are one example: they can serve good purposes in civilian life, but they can also be used as weapons in war. “That is why it is important to identify and analyze all areas in which innovations and scientific results are useful or harmful,” says Hurst. Beyond that, the researcher emphasizes, an effective model for minimizing these risks is needed.

The English psychologist James Reason developed such a model: the so-called Swiss cheese model. It holds that several layers of protection, stacked on top of each other, can reduce the occurrence of an undesirable event even though each individual layer is flawed. Like a thin slice of Emmental, each layer has holes. However, if you stack the slices – to stay with the analogy – and make sure that the holes do not line up, you build more effective protection against risk. “Our idea now is to develop such a model to protect against potentially harmful research results. The layers of this protection model include researchers, scientific associations, journal publishers, funders and the state,” says Samia Hurst. “If they work well together, risks could probably be reduced.” Hurst’s research on this topic is still in its infancy, and the same applies to the discussions about an ethical and responsible approach to chatbots and AI. A lot will depend on them.
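The logic of stacking slices can be made concrete with a little arithmetic. The sketch below is an illustration only, not part of Hurst’s work: it assumes that each layer lets a harmful result slip through with some probability (the numbers are invented, and the function names are hypothetical) and that the layers fail independently. In that case the chance of slipping through every layer is the product of the individual rates. In the worst case, where the holes lie exactly on top of each other, the stack is no better than its single tightest layer.

```python
from math import prod

# Hypothetical per-layer "miss rates": the chance that a harmful result slips
# through each layer. Layer names come from the article; the numbers are invented.
MISS_RATES = {
    "researchers": 0.5,
    "scientific associations": 0.5,
    "journal publishers": 0.5,
    "funders": 0.5,
    "state": 0.5,
}


def breach_if_independent(rates):
    """Holes placed independently: a hazard must pass every layer, so rates multiply."""
    return prod(rates)


def breach_if_aligned(rates):
    """Holes stacked directly on top of each other: only the tightest layer counts."""
    return min(rates)


rates = list(MISS_RATES.values())
print(breach_if_independent(rates))  # 0.03125
print(breach_if_aligned(rates))      # 0.5
```

Under these assumptions, five leaky layers that each stop only half of the hazards would together let through about 3 percent of them, whereas with perfectly aligned holes they would still let through half.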